The introduction of speech recognition technology has significantly impacted the way documents are produced, especially healthcare documents. Medical transcription speech recognition platforms are designed to interpret physicians’ dictation and convert it into text that can be stored on a computer. In fact, this technology makes things easier for medical transcription companies as they can edit and produce complete clinical documents faster from these text files.

Earlier this year, TechCrunch reported that Google is planning to compete directly with voice recognition companies by opening up its speech recognition API to third-party developers. According to a recent report in The Verge, Google is trawling the social media network Reddit to improve its voice recognition capabilities, especially the ability to interpret accents. Voice interfaces are becoming increasingly critical to Google’s software and hardware. A voice recognition interface trained on a narrow selection of voices cannot respond well to accents that fall outside its frame of reference. According to The Verge, Google is looking to rectify this problem by recruiting Redditors to supply more speech data, using a third-party company, Appen, to gather a varied range of accented audio samples from the website’s users.

There are high-tech apps that turn voice recordings on mobile devices into text that can be sent to computers in different ways. For instance, physicians who have Google Voice can use any phone to call their Google Voice number and leave themselves a message. The voicemail message appears as text in their Google Voice inbox, and the text can be saved as a document on the computer. Google’s Cloud Speech API recognizes over 80 languages and variants. It can transcribe the speech of users dictating into an application’s microphone, enable command-and-control through voice, and transcribe audio files.

Despite the advances in voice recognition technology, many challenges still affect its accuracy, such as:

- Mediocre voice samples

- Changes in a speaker’s voice due to factors such as mood, health condition, and other changes over time

- Background disturbances

- Changes in the call’s technology, e.g., digital vs. analogue, and upgrades to circuits and microphones

- Misinterpretation of complex medical terms and homophones

- Inadvertent errors in physicians’ speech

These are the reasons why, despite the advances in voice recognition, conventional medical transcription services are still relevant.
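To illustrate the homophone problem from the list above, here is a minimal Python sketch of how a draft transcript might be scanned for sound-alike medical terms that a human editor should double-check. The watch list and the function name `flag_sound_alikes` are hypothetical and chosen only for illustration; this is not how any particular speech recognition product works.

```python
import re

# Hypothetical watch list of sound-alike medical terms (illustrative, not exhaustive).
# Each entry maps a term to the similar-sounding term it is often confused with.
SOUND_ALIKE_TERMS = {
    "ileum": "ilium",          # part of the small intestine vs. a pelvic bone
    "ilium": "ileum",
    "perineal": "peroneal",    # relating to the perineum vs. the fibular region
    "peroneal": "perineal",
    "dysphagia": "dysphasia",  # difficulty swallowing vs. impaired speech
    "dysphasia": "dysphagia",
}


def flag_sound_alikes(draft: str) -> list[tuple[str, str]]:
    """Return (term found, sound-alike to check) pairs in order of appearance."""
    flags = []
    for match in re.finditer(r"[A-Za-z]+", draft):
        word = match.group().lower()
        if word in SOUND_ALIKE_TERMS:
            flags.append((word, SOUND_ALIKE_TERMS[word]))
    return flags


draft = "Patient reports dysphagia; imaging shows thickening of the terminal ileum."
for found, alternative in flag_sound_alikes(draft):
    print(f"Review '{found}': could the speaker have meant '{alternative}'?")
```

A check like this can only point at risky words. Deciding which term the physician actually meant still depends on clinical context, which is where a trained transcriptionist comes in.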

Use of voice recognition technology in radiology transcription is fraught with problems. A report in Diagnostic Imaging points out that when using voice recognition and self-editing, the radiologist would have to shift visual attention rapidly and frequently. It is virtually impossible to view images and type or edit text at the same time. According to the report, a research study found at least one major error in almost 23 percent, or nearly one-fourth, of the speech recognition reports, while only 4 percent of reports produced by conventional dictation transcription had errors.
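To put those two percentages in perspective, the short Python sketch below applies them to a hypothetical batch of 1,000 reports. The batch size and the function name are assumptions made for illustration. The two rates are the ones quoted above, and the report may not define "error" identically for both methods, so treat the comparison as rough.

```python
# Error rates as quoted above from the Diagnostic Imaging report.
SPEECH_RECOGNITION_ERROR_RATE = 0.23  # reports with at least one major error
CONVENTIONAL_ERROR_RATE = 0.04        # conventional dictation transcription


def expected_reports_with_errors(total_reports: int, error_rate: float) -> int:
    """Estimate how many reports in a batch would contain errors."""
    return round(total_reports * error_rate)


batch_size = 1000  # hypothetical batch, for illustration only
speech_errors = expected_reports_with_errors(batch_size, SPEECH_RECOGNITION_ERROR_RATE)
conventional_errors = expected_reports_with_errors(batch_size, CONVENTIONAL_ERROR_RATE)
ratio = speech_errors / conventional_errors

print(f"Speech recognition: about {speech_errors} of {batch_size} reports with errors")
print(f"Conventional transcription: about {conventional_errors} of {batch_size}")
print(f"Ratio: roughly {ratio:.2f} to 1")
```

For a batch of that size, the quoted rates work out to about 230 reports versus 40, or nearly six times as many.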

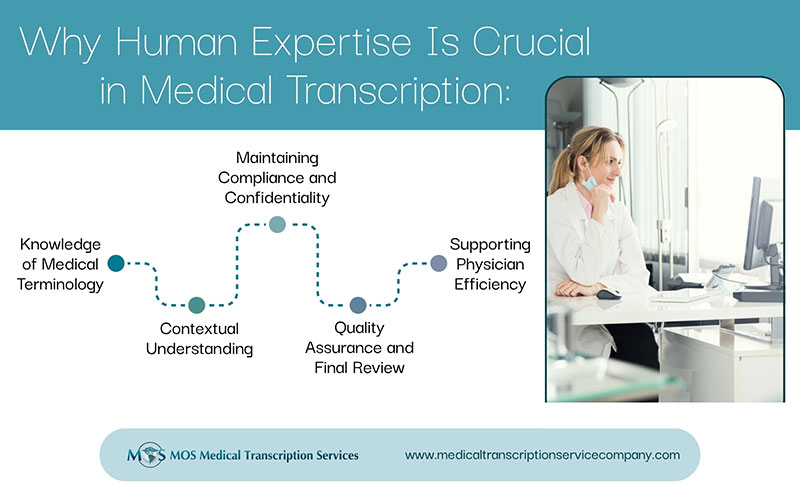

Medical transcriptionists employed by established medical transcription service companies can recognize different accents and adapt to the speed of speech and to background noise. They go through several hours of training on real doctor dictation to gain real-world experience. Their knowledge base spans medical terminology and jargon, anatomy, physiology, language, grammar, and word-processing programs. These professionals have moved with the times and provide EHR-integrated services. They are skilled editors who can transcribe, edit voice recognition draft reports, and proofread physician documents in EHRs with a high degree of accuracy.